Gallery

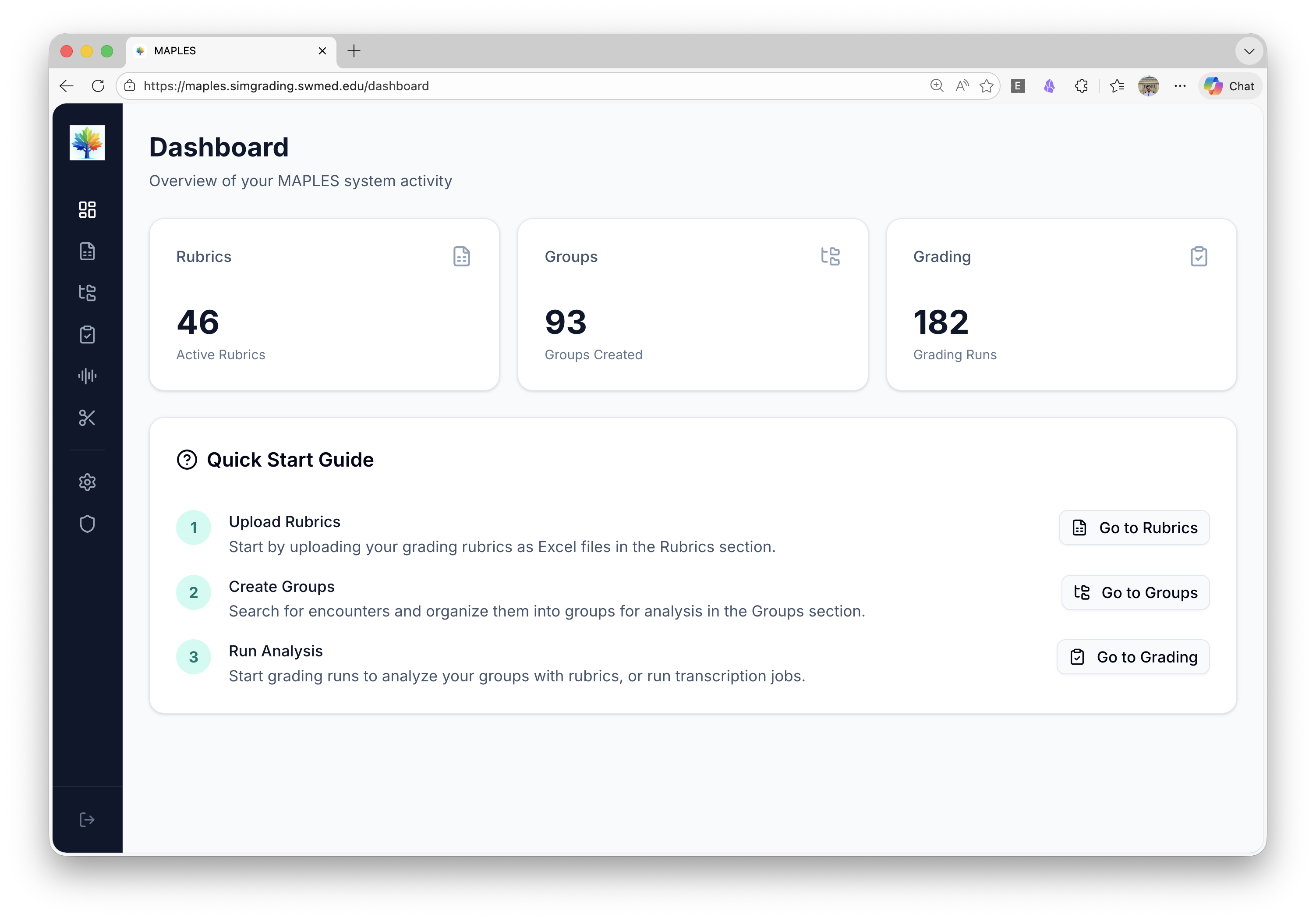

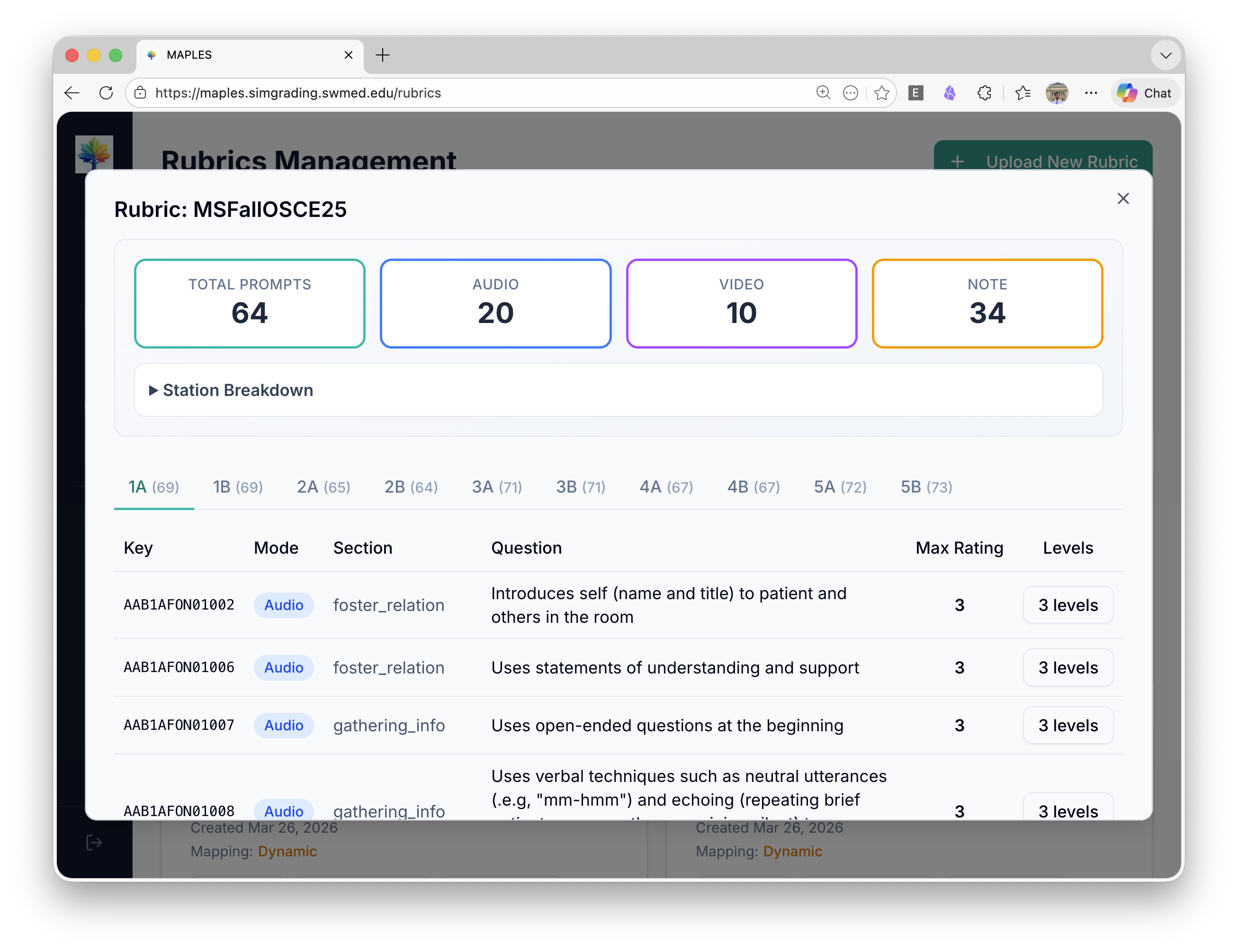

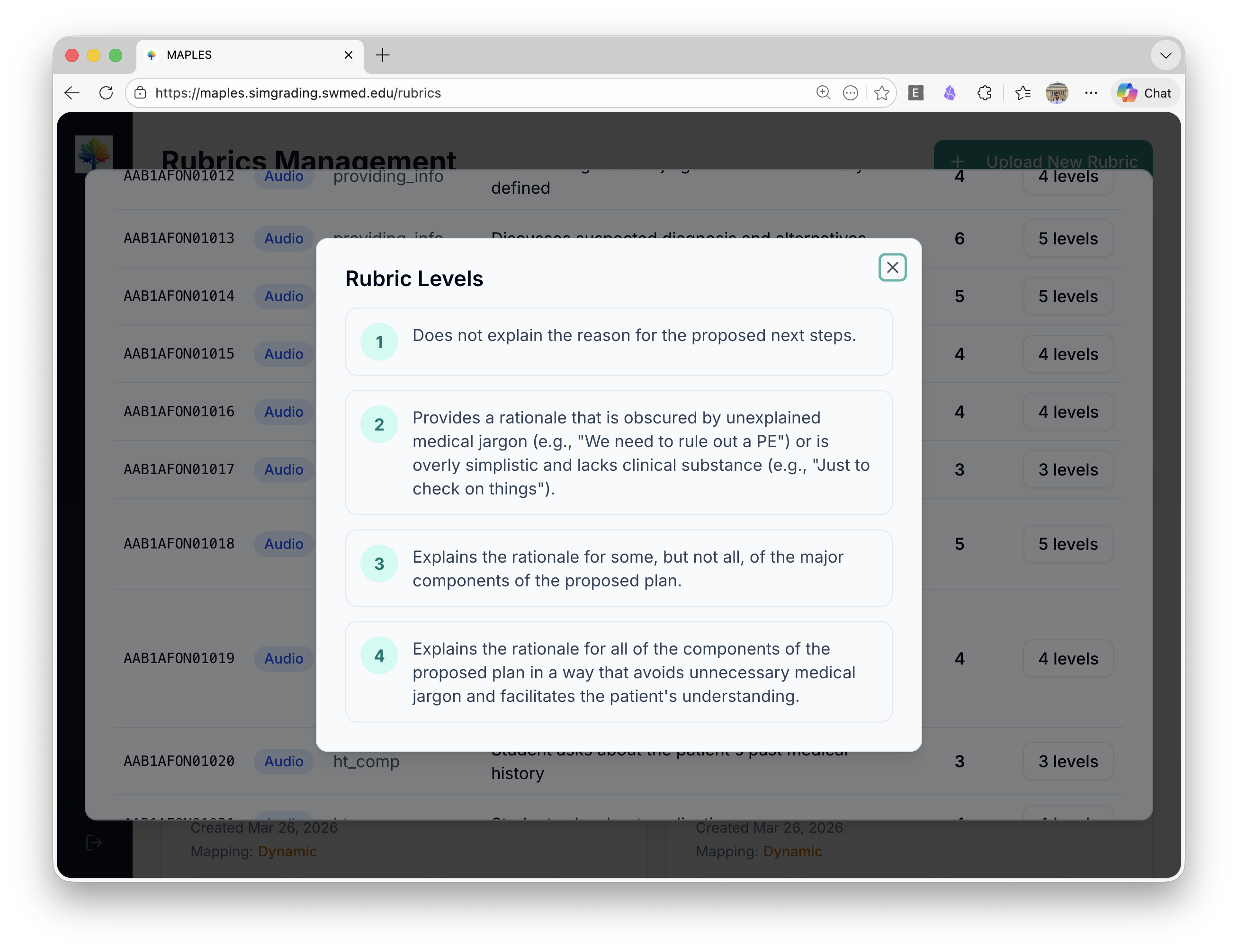

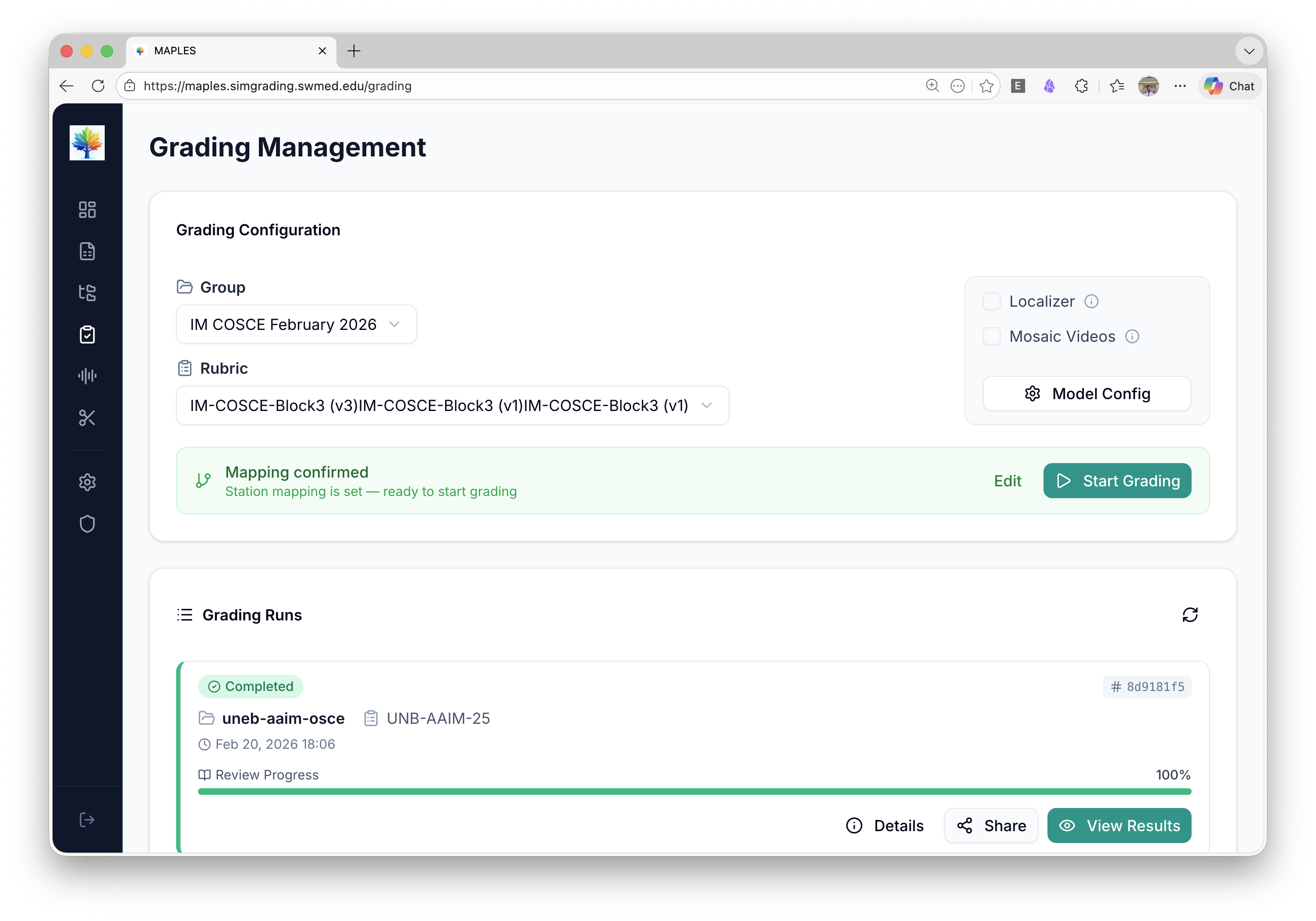

See the Platform in Action

Screenshots, architecture diagrams, and published figures from the MAPLES AI grading platform and the OSCE simulation environments it serves.

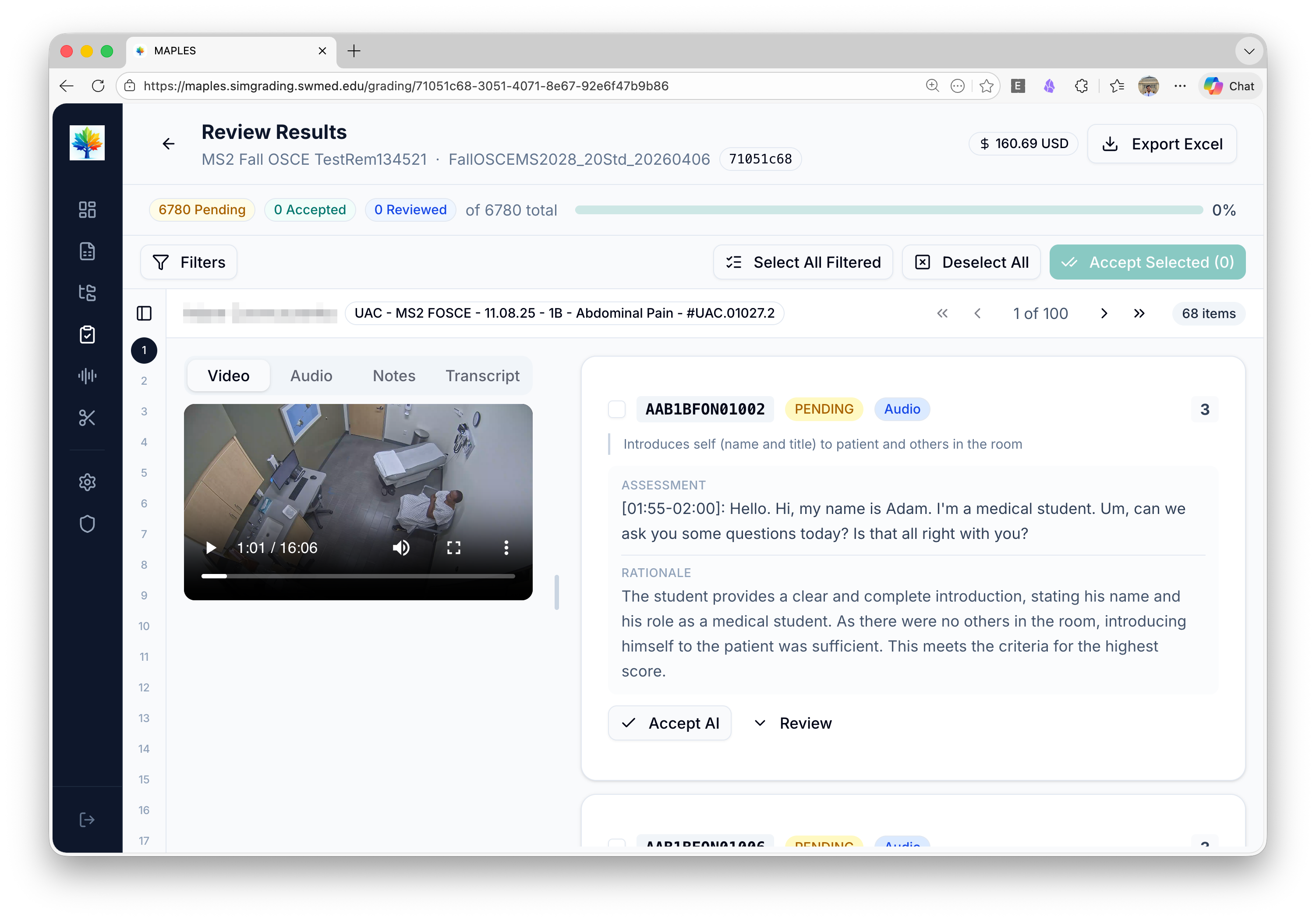

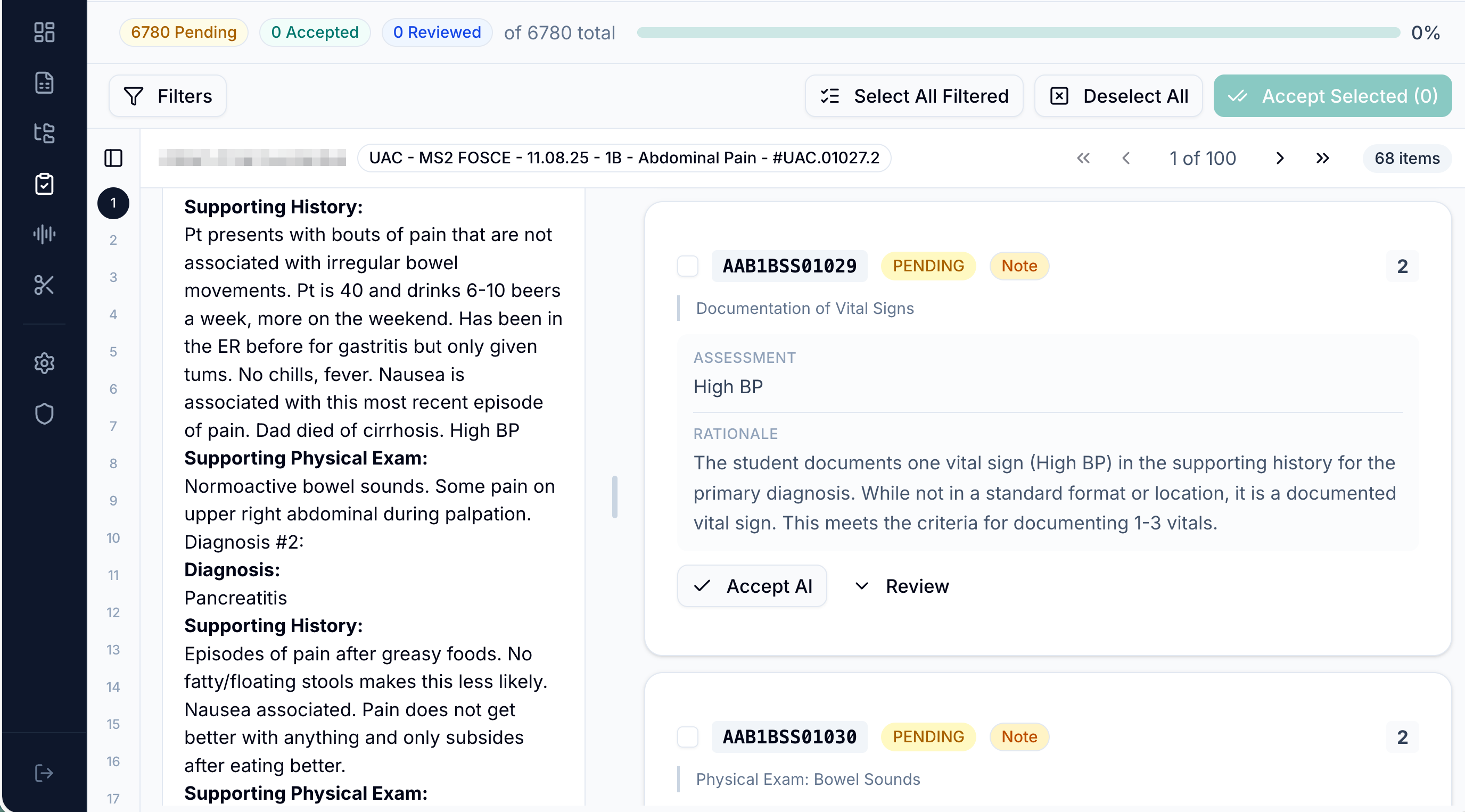

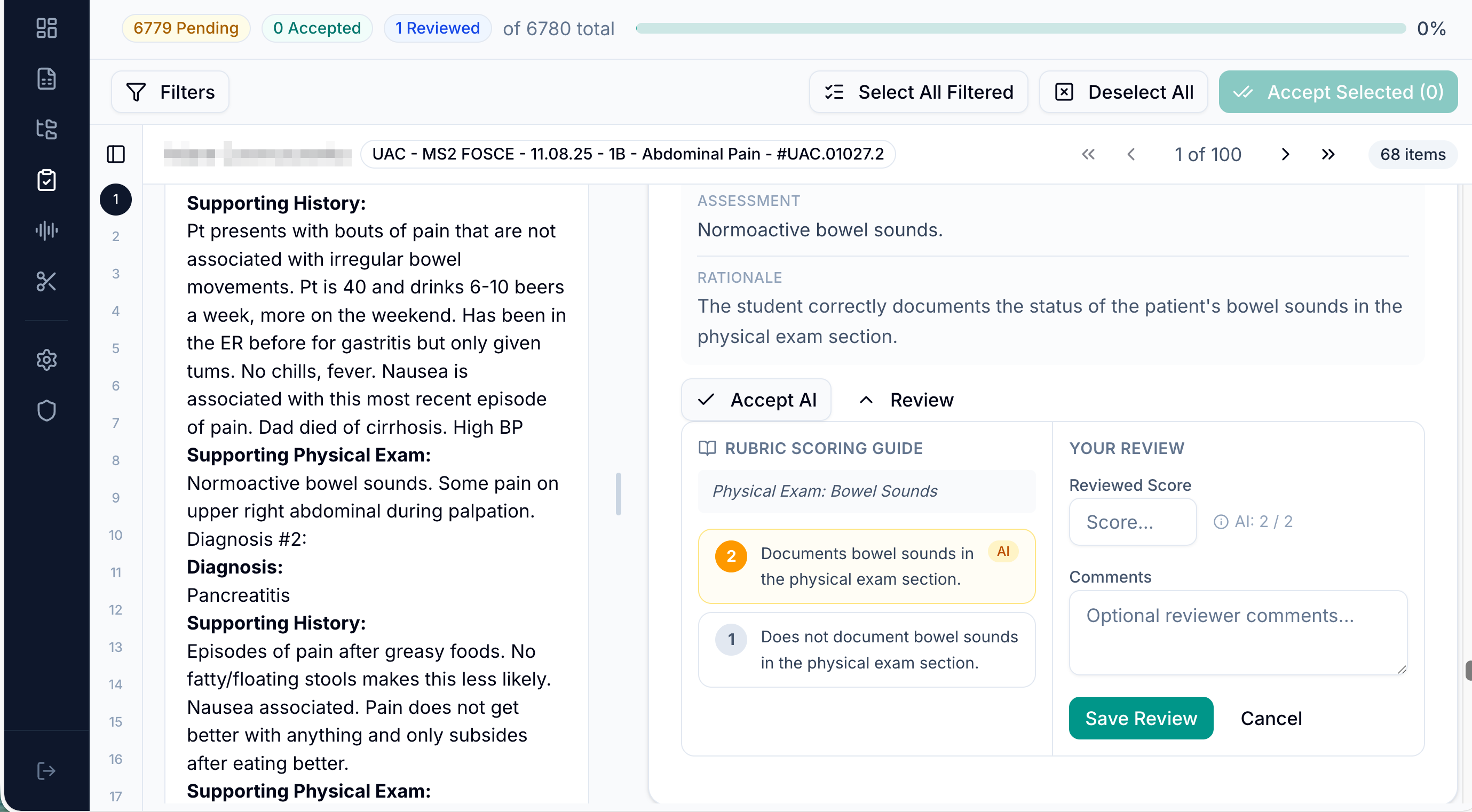

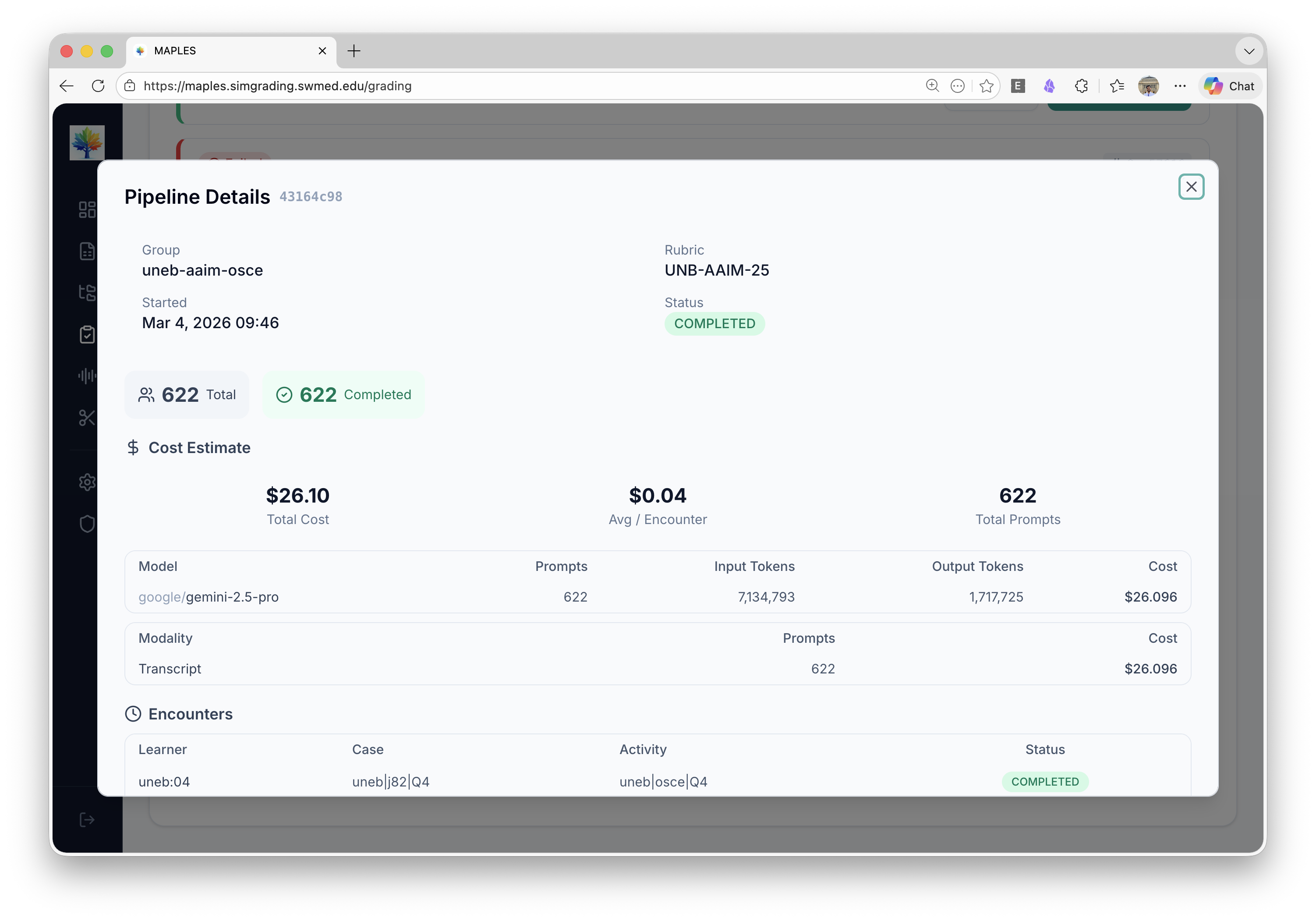

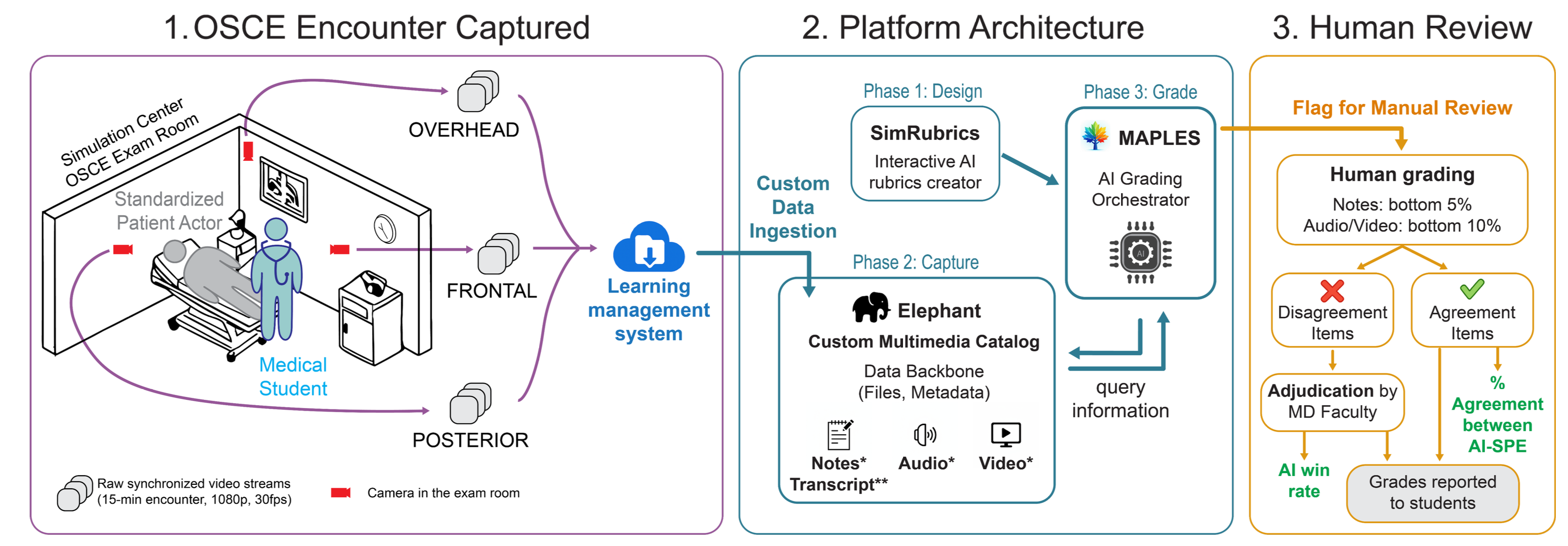

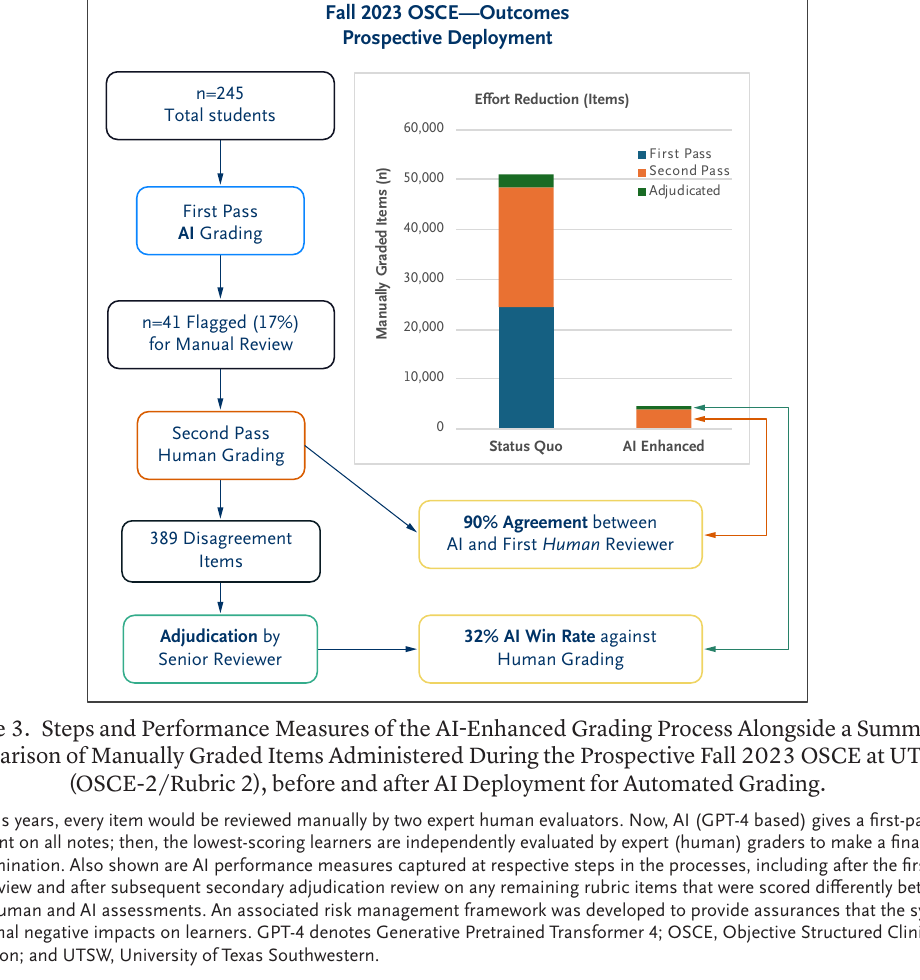

MAPLES Platform

The AI grading interface used by faculty and simulation staff.

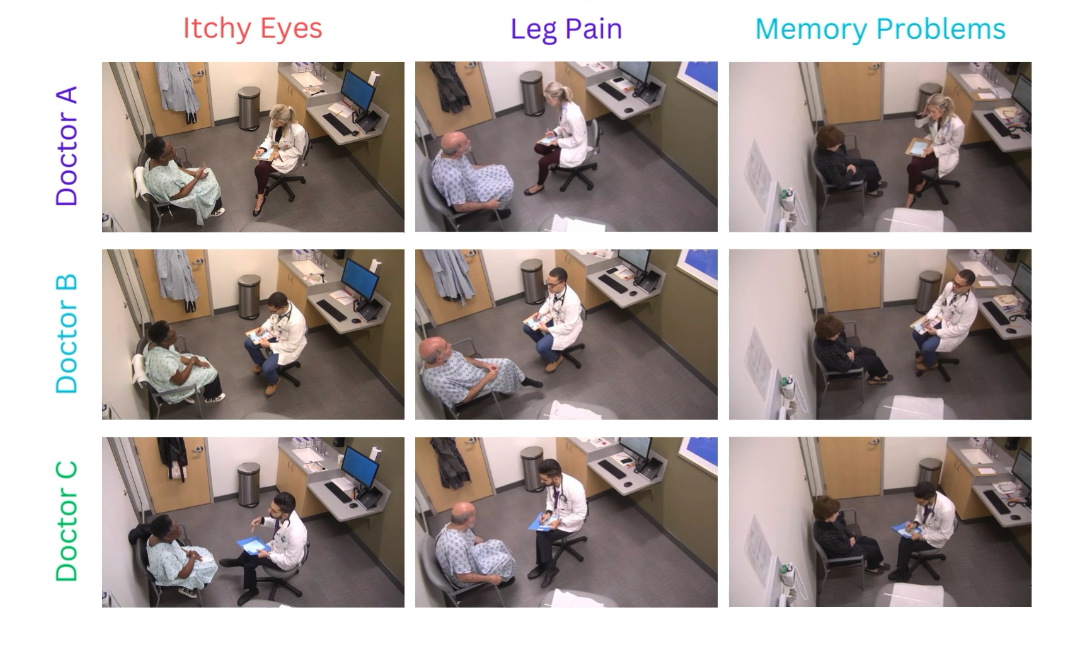

OSCE Setup & Simulation Environment

The clinical simulation environments where encounters are captured.

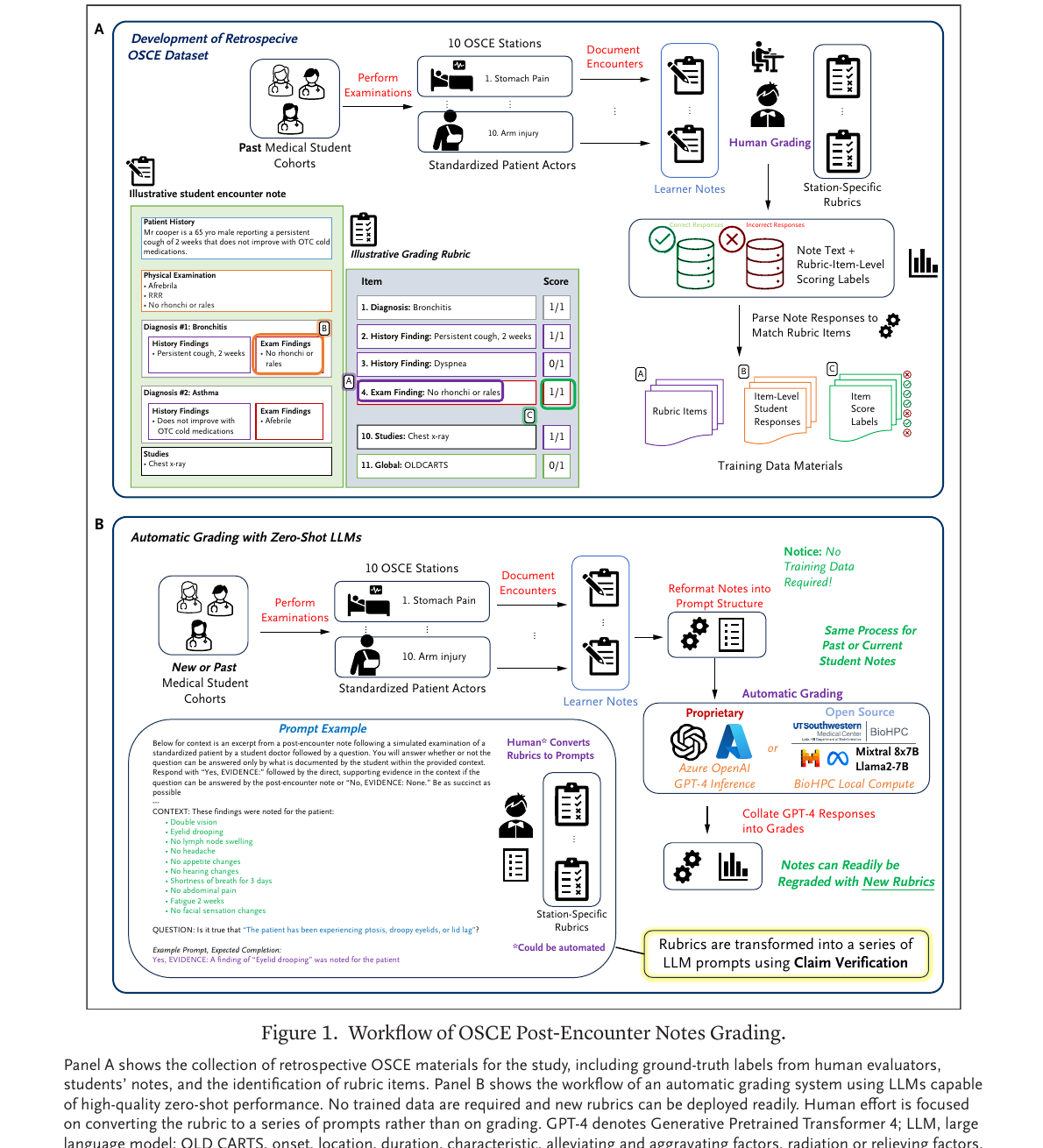

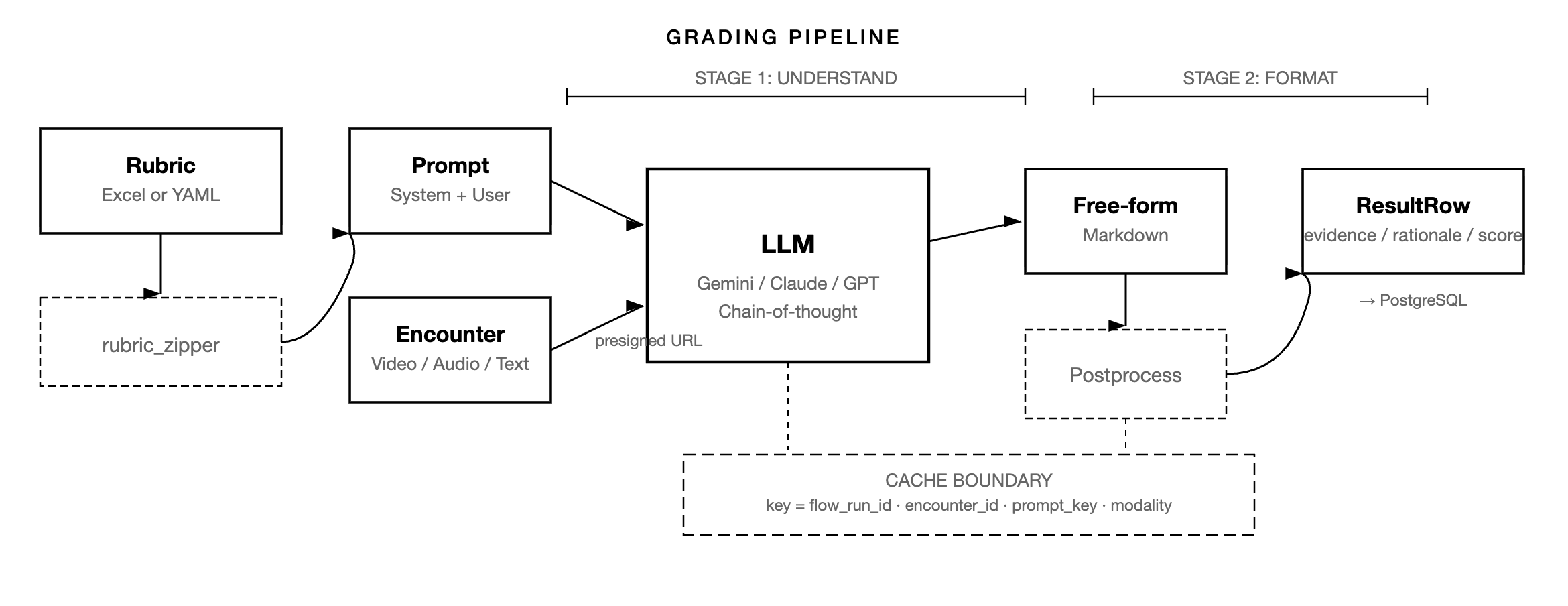

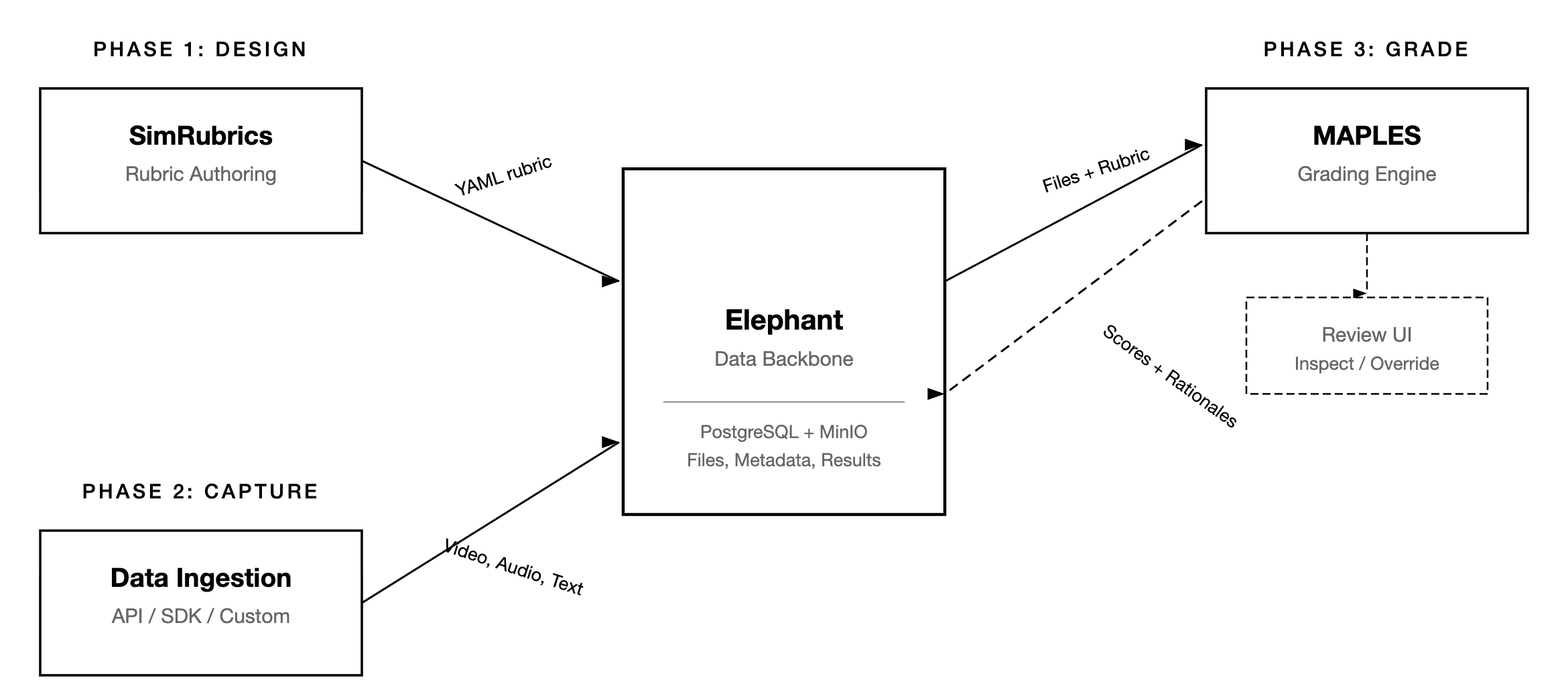

Platform Architecture & Published Figures

Figures from the NEJM AI publication and technical report, showing the rubrics-to-prompts pipeline.

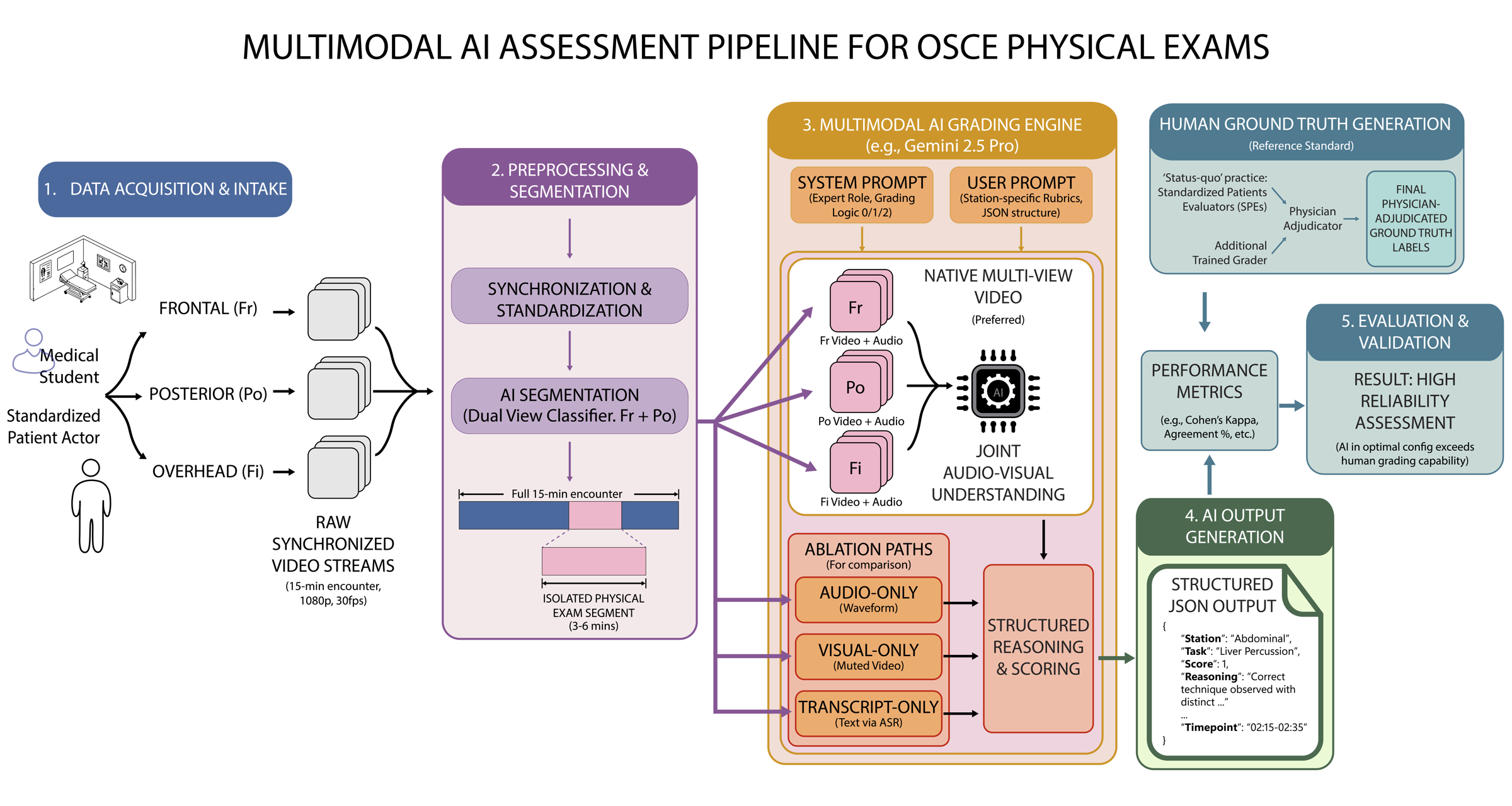

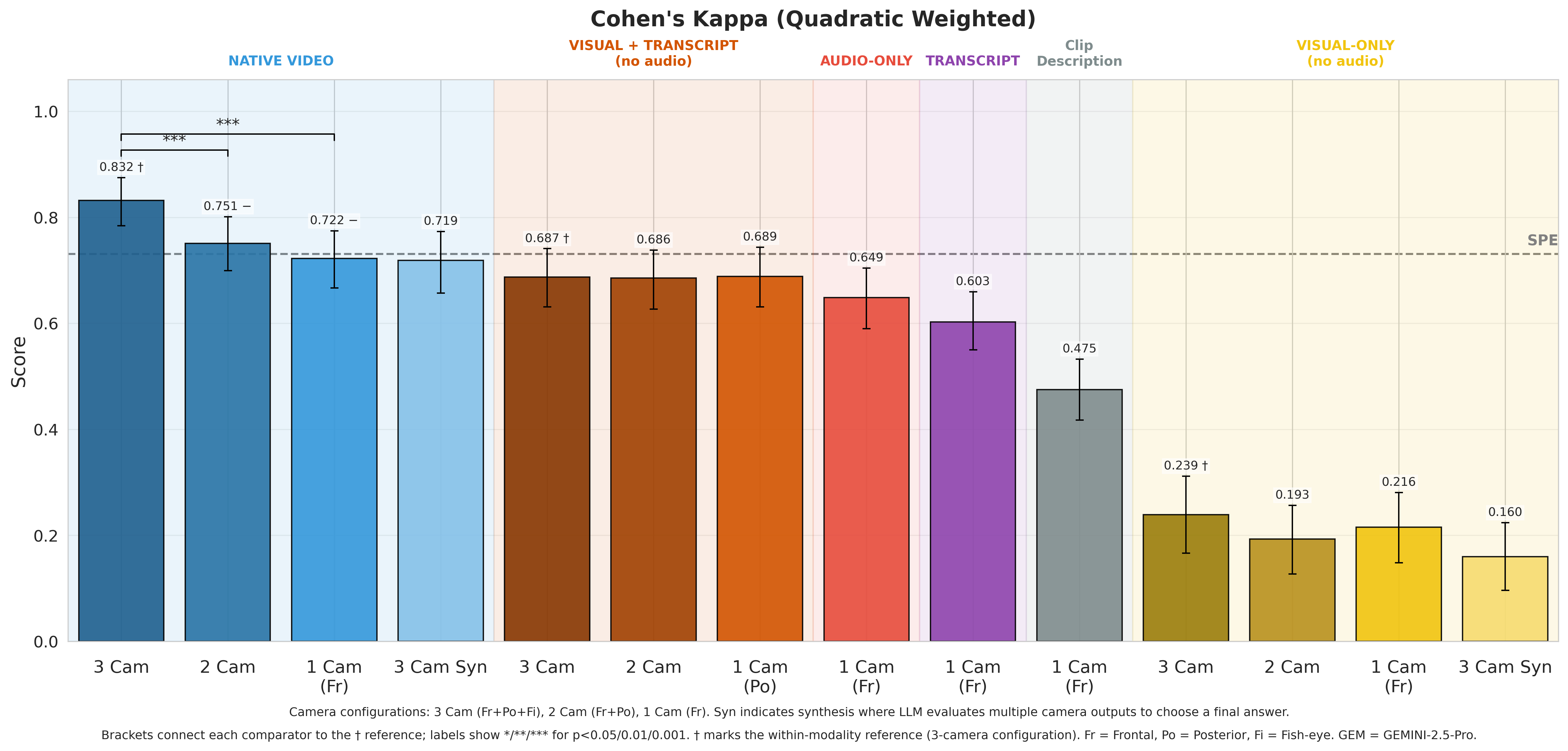

Multimodal AI Assessment

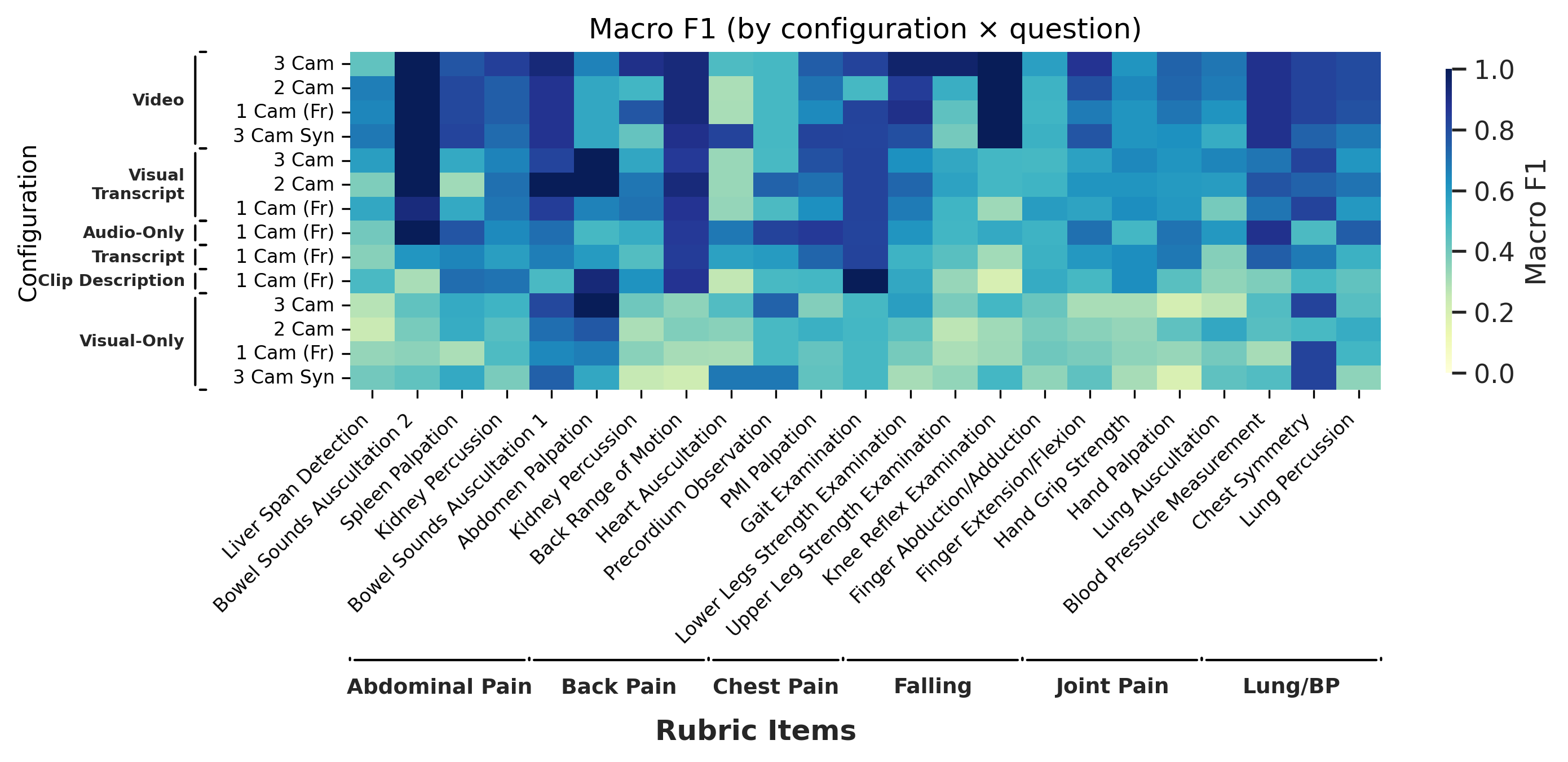

Multi-camera video analysis using Gemini, from the multimodal physical exam preprint (Kang et al., 2026).

More screenshots of the MAPLES interface and OSCE simulation environments coming soon. Have questions about the platform? Get in touch.